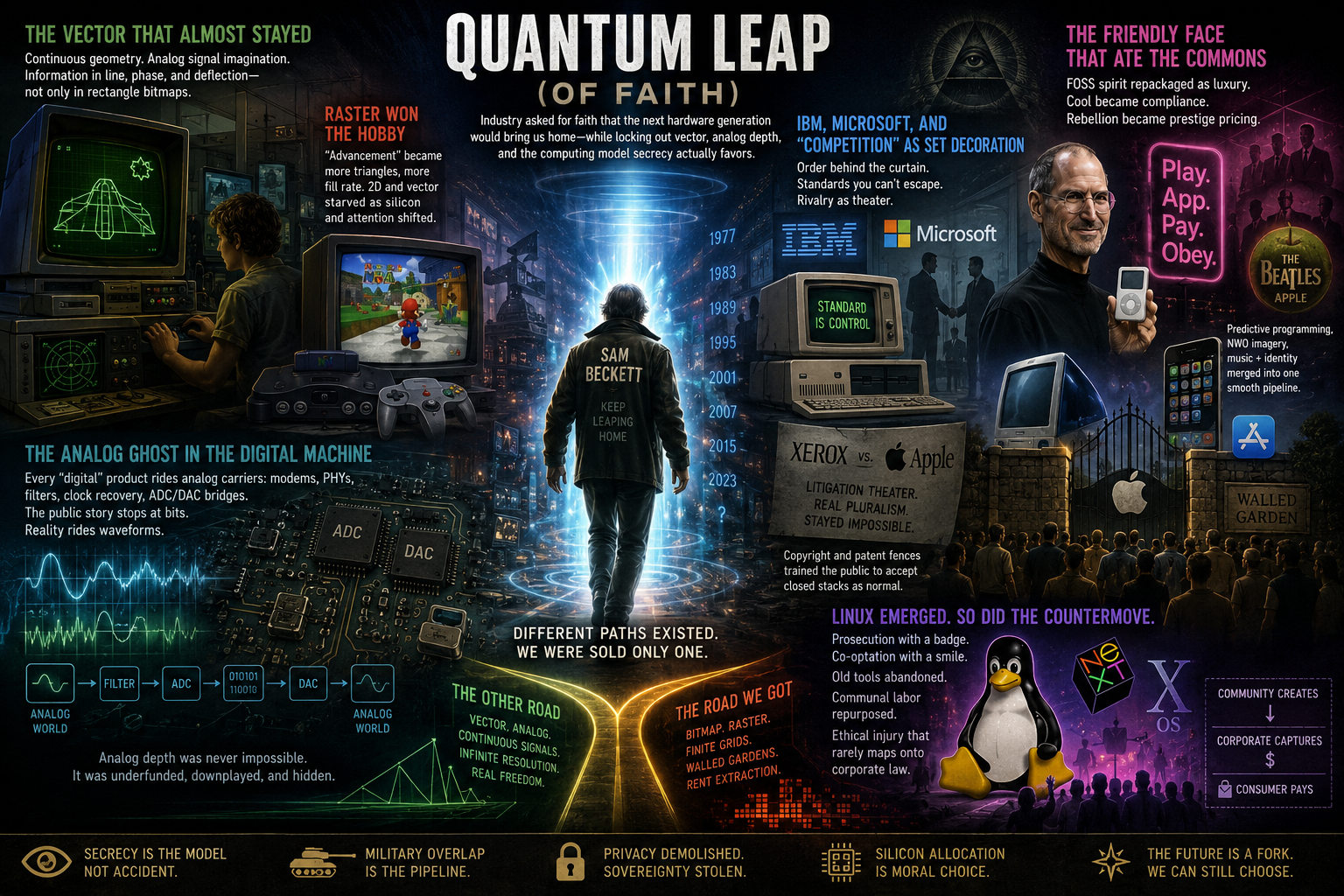

Quantum Leap (of faith)

Industry asked for faith that the next hardware generation would bring us home, while locking out vector displays, analog signal depth, and the computing model that secrecy actually favors

In Quantum Leap, Sam Beckett steps through a beam that puts him in a new body to solve a new problem. Each leap asks for faith: maybe the next jump will bring him home. Consumer technology copied that emotional contract. We handed manufacturers and platforms total trust—no meaningful audit of the stack, no serious public map of what analog channels ride underneath the digital story—and we still narrate it as competition and progress. The fork toward bitmap CRT, raster monopoly, and walled gardens was never the only road; it was the road that preserved order, surveillance geometry, and revenue concentration, while vector, analog signal depth, and a different theory of memory (including what I call the real quantum computer) were left to atrophy in public—or developed where the public narrative does not reach.

The price tag on the personal computer

Since general-purpose computers became mass infrastructure, the pattern has been obvious: secrecy, military overlap, privacy demolition, and a long tail of secondary harms. Listing every subsidiary insult would add bulk without changing the thesis. The important claim is structural: the industry did not drift into this shape by accident. Alternative industrial paths existed in the same decades; many were visible before they were retired.

The vector that almost stayed

Arcades and labs in the 1980s still flaunted vector displays: electron beams drawing continuous geometry, a natural home for analog-signal imagination, with information carried in line, phase, and deflection, not only in rectangular bitmaps. The consumer story pivoted hard toward raster-scan CRTs and the bitmap as the default real. Vector would not have solved every problem, but the competitive field was narrowed so that high-resolution 3D raster became the only legitimate arena of “advance,” with consequences we still pay for thermally and economically.

Another fork worth naming explicitly: storing and addressing information in the infinite-resolution degrees of freedom of a continuous signal—the frequency-and-interference family of ideas—versus forcing everything into finite grids and discrete addressing the public is allowed to see.

IBM, Microsoft, and “competition” as set decoration

IBM functioned as the backbone of the so-called PC revolution while performing disinterest in consumer drama: publicly downplaying consumer electronics while making sure the homebrew and kit scene could not mature into a competing legitimacy. The real work, on this reading, was order: making sure small vendors and garage builders could not crystallize a parallel stack. The IBM–Microsoft pairing bought a narrative of rivalry that still hid a shared interest in standards people could not escape, and in the scene staying governable.

The Xerox–Apple litigation theater fits the same glove: bright lights, legal language, appearance of market combat—while actual competitive pluralism (multiple stacks, multiple ownership models, multiple display paradigms) stayed impossible. Copyright and patent fences did ideological work: they trained the public to treat closed stacks as normal.

The friendly face that ate the commons

When Linux emerged as the serious open systems trunk, the counter-move did not only wear a prosecutor’s badge. It also wore a hero mask. Steve Jobs—out, then maneuvering back through NeXT into a reborn Apple—marketed polish and Unix-flavored power while retail absorbed energy that might have fed communal compatibility. Old Macs and their peripherals were stranded; neither Linux nor the legacy Mac ecology cleanly “won” from the fold-in. The draw was high-margin machines and fast product cadences that turn small engineering polish into enormous margin—catnip for artists and the music scene, who traded compatibility for glow.

Meanwhile Linux frayed: distributions multiplied into factions that barely share a userland, and the kernel world kept pace with Intel, AMD, and ARM largely on capital’s schedule, not a unified hobbyist one. Whether you call Apple's kernel genealogy Darwin, BSD, Mach, or something else, the felt outcome for many users matched a harvest: FOSS labor and volunteer culture repackaged as luxury SKUs.

I read that pattern beside linked work on OC ReMix privatization, SDA/GDQ charity polarization, and Homestuck/Hussie. The through-line is free contribution re-platformed into IP real estate and fan management, with ethical injury that rarely maps onto case law the way corporate counsel prefers. In that light Jobs is not remembered as a quirky genius who “happened” to win; he is read as controlled-opposition grammar in a T-shirt: cool sold as compliance, design as lock-in, rebellion as prestige pricing, fans as a managed demographic.

Predictive-programming and New World Order imagery in Apple marketing, Beatles–Apple naming rhymes, and iPod-era youth capture belong in the same paragraph: they merged music, identity, and hardware into one smooth pipeline—few vendors, few stores, Play or App temples where “everything you need” already passed tithe.

When raster won the hobby

Vector games could have remained a mainline genre inside manufacturer ecosystems; instead the industry trained buyers to hear “advancement” as “more triangles, more fill rate.” When Nintendo 64–style 3D marketing landed, 2D craft starved almost overnight—not because vector math stopped working, but because attention and silicon budget were redirected.

Counterfactual: had vector lanes stayed funded inside first-party studios, GPUs might not have needed to become furnace-class monoliths justified by synthetic benchmarks. Bitcoin mining and later LLM training then re-used that silicon grammar for purposes orthogonal to play, until graphics felt like a caption on the real workload, and “games” themselves slid toward lease and rental on someone else’s logic rather than cartridge-era ownership. Moore’s Law triumphalism reads as an insult: it pretends planar CMOS shrinking was the only physics on offer, while good analog paths were not forbidden by nature; they were forbidden by allocation. The same decades quietly funded priorities the marketing sheet never names: parallel stacks, out of frame.

The analog ghost in the digital machine

Every “digital” product rides analog carriers: modems, PHYs, filters, clock recovery, ADC/DAC bridges. The public story stops at bits; the electromagnetic substrate does not. Demodulators trained on one interpretation of a line can ignore other structure co-present in the waveform.

If that sounds paranoid, state it as a design fact: any rich medium can carry steganographic or out-of-band energy unless you own the entire measurement chain. Nation-state and cartel incentives both point the same way: use what the bill of materials already contains. A civilization that can only read vendor APIs has jailed its senses inside someone else’s enumerated list.
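The design fact above can be sketched in a few lines. This is a toy, noise-free illustration, not a description of any real modem or PHY: the two-layer line code, the amplitude values, and every function name here are hypothetical choices made for the example. The "official" receiver reads only the sign of each sample, while a second receiver reads the magnitude the first one discards.

```python
# Hypothetical two-layer line code; amplitudes and names are illustrative assumptions.
PRIMARY_AMPLITUDE = 1.0   # the sign of each sample carries the "official" bit
HIDDEN_OFFSET = 0.05      # a small magnitude shift carries a second, co-present bit


def encode(primary_bits, hidden_bits):
    """Return one sample per symbol carrying both layers."""
    samples = []
    for p, h in zip(primary_bits, hidden_bits):
        sign = 1.0 if p else -1.0
        magnitude = PRIMARY_AMPLITUDE + (HIDDEN_OFFSET if h else -HIDDEN_OFFSET)
        samples.append(sign * magnitude)
    return samples


def demodulate_primary(samples):
    """Standard receiver: threshold at zero and discard the magnitude."""
    return [1 if s > 0 else 0 for s in samples]


def demodulate_hidden(samples):
    """Second receiver: read the magnitude the first receiver threw away."""
    return [1 if abs(s) > PRIMARY_AMPLITUDE else 0 for s in samples]


primary = [1, 0, 1, 1, 0, 0, 1, 0]
hidden = [0, 1, 1, 0, 1, 0, 0, 1]
line = encode(primary, hidden)

print(demodulate_primary(line) == primary)  # the official story is intact
print(demodulate_hidden(line) == hidden)    # so is the layer it never looks at
```

The primary demodulator returns the same bits whatever the hidden layer contains, which is the sense in which a receiver built for one interpretation can ignore other structure in the same waveform. A real channel adds noise, which this sketch leaves out entirely.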

The deeper analog claim is simple: a database built for analog-resolved storage would not behave like binary lookup at all. In binary media, every cell is effectively yes/no and capacity scales by multiplying tiny decisions. In an analog-resolved model, each storage locus can carry a continuous waveform and therefore far denser descriptive structure than a single bit. Put plainly: optical-disc history could have gone very differently. CD, DVD, and Blu-ray are already analog physical systems constrained by digital encoding choices. A different standards path could have stored native signal fields directly (closer in spirit to magnetic tape), not only quantized yes/no streams. On this reading, one disc class could have held what now requires massive libraries.
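The density comparison can be put as arithmetic. A minimal sketch, assuming only the standard counting rule that a storage locus with N reliably distinguishable states carries log2(N) bits: the function names and the level counts below are hypothetical, and the sketch takes the number of distinguishable levels as a given input without modeling how a real medium or read chain would set it.

```python
import math


def bits_per_locus(distinguishable_levels: int) -> float:
    """Bits carried by one storage locus with the given number of distinguishable states."""
    if distinguishable_levels < 2:
        raise ValueError("a locus needs at least two distinguishable states")
    return math.log2(distinguishable_levels)


def loci_needed(total_bits: int, distinguishable_levels: int) -> int:
    """How many loci it takes to hold total_bits at that level count."""
    return math.ceil(total_bits / bits_per_locus(distinguishable_levels))


# Binary cell (yes/no) versus a hypothetical 256-level cell, same 8,000-bit payload.
print(bits_per_locus(2), loci_needed(8_000, 2))      # 1.0 8000
print(bits_per_locus(256), loci_needed(8_000, 256))  # 8.0 1000
```

Capacity per locus grows with the logarithm of the level count, so the whole comparison turns on how many levels the medium and the reader can actually tell apart.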

That is why bitmap-era “progress” looks backward from this angle: the industry chose a narrow coding regime on top of an analog substrate, then called the resulting scarcity inevitable physics.

Escaping that jail is larger than swapping OS distributions. The current state of technology is a major problem that cannot be overstated. In my view, the stack as deployed now will have to be dismantled and destroyed, then rebuilt under real audit from the substrate up. As a surveillance and privacy question alone, almost every electronic class now ships with exploitable seams or backdoor potential: phones, cars, planes, power plants, kids’ toys, pacemakers. We have to do better than inheritance-by-default from compromised architecture.

Where the “quantum” label points in my model

Public quantum computing is sold as qubit circuits, future speedups, and complexity-theory trophies. I treat much of that stack as misdirection that defers hope to a not-yet machine while avoiding storage and addressing physics.

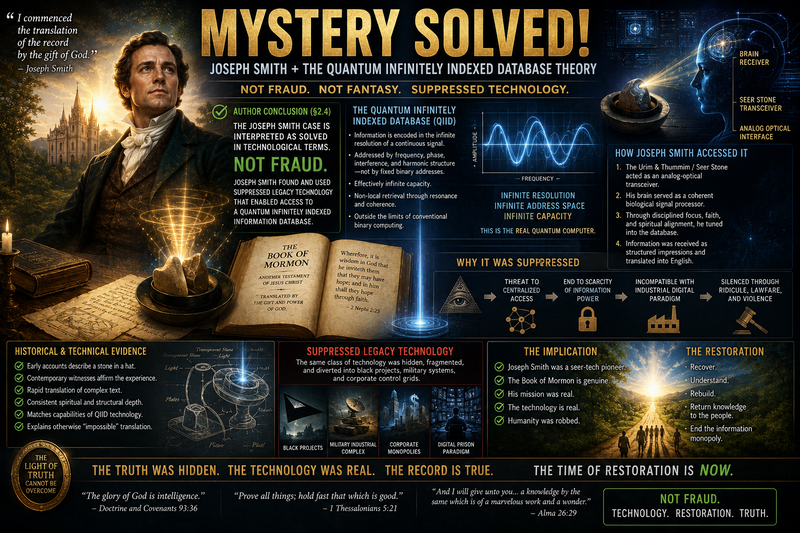

The real quantum computer, in my thesis, is closer to infinitely indexed memory: knowledge nested in frequency resolution, query-as-address, resonant lookup in principle—not faster transistors in the usual sense, but a different way information sits in a medium. Tables, mainstream contrast, Mars/Earth speculation, and science-fiction parallels sit in the infinitely indexed storage / quantum computer investigation; the preceding paragraphs only anchor vocabulary so narrative and technical notes stay aligned.

One historical lane follows from that, and I treat it as solved in technological terms: reports of crystal-memory objects preserve the memory of a storage technology, not only myth. The Joseph Smith episode is interpreted as interaction with a signal-bearing object where “reading” occurred by resonance/telepathic coupling, not by alphabetic inscription. In that frame, conflicting retellings track extraction from different frequency strata or source impressions rather than fabrication. The claim is direct: he found suppressed legacy technology that was once common.

The leap we still owe ourselves

We gave industry something close to total faith and almost no audit—the way Beckett was asked to trust the next leap. LLM assistants already hint—imperfectly—what indexed synthesis feels like for one person with limited time; they do not prove my hardware story, and they hallucinate. Still, the contrast stings: private models can mobilize text faster than manual scholarship, while public infrastructure keeps posing the endpoint as a faster game console.

Where next

- Infinitely indexed storage / quantum computer — full investigation — mainstream qubit narrative vs storage thesis, author blocks in §2–§2.2, science-fiction index tropes.

- Homestuck / Hussie — controlled opposition investigation — voluntary labor, fandom capture, parallel to repackaging commons.

- OC ReMix — privatization investigation.

- SDA / GDQ / MSF — polarization investigation.

- Microchips — shrinking / suppression — complementary read on opaque silicon narrative.

- Mars hub — for readers threading breakaway tech with the Mars-versus-Earth hypothesis in the quantum storage dossier.

Framing and limits

What precedes is thesis and pattern language, not a chronological industry history with balanced accounting for every platform. Legal outcomes, kernel lineages, and executive intent are named where they serve the argument and left for specialists elsewhere. The full quantum storage dossier carries tier discipline, open questions, and Limits; hallucination risk applies to LLM-assisted research the same way it applies anywhere else.

Keywords: #QuantumLeap #VectorGraphics #AnalogSignal #ComputingHistory #WalledGarden #QuantumComputer #KnowledgeAccess #ParadigmThreatFiles

Substack: paradigmthreat2.substack.com/p/quantum-leap-of-faith

Last updated: 2026-05-06

Written and narrated by Ari Asulin, with drafting and research support from LLM agents.
